The Anthropic Effect

When knowledge stops being scarce, everything gets repriced. Last week, markets found out.

Dear readers, good morning.

This one has been a long time coming, so a quick heads-up before we start: this is a longer read than our usual Saturday debrief. There’s just too much to unpack, and I’ve tried to compile it all in the simplest way possible.

AI has been sitting in the background of almost everything we talk about: markets, jobs, geopolitics, productivity. Always present, yet strangely hard to pin down. Too technical in one place, too alarmist in another, too optimistic somewhere else.

Last week, after a series of long reads, market moves, and a few viral moments, my thoughts finally converged in a way that felt coherent enough to share. What follows is my attempt to make sense of what’s actually happening, in real time.

The piece that started it all

The catalyst was a Substack article by Citrini Research, a small macro boutique, that quietly went viral and ended up generating over 20 million views. Their piece imagined a “Global Intelligence Crisis” set in 2028, written from the future, looking back at how things unravelled. As I read it, one thought kept coming back: this doesn’t feel like a warning about 2028. It feels like a description of what’s already started to happen.

Citrini’s core argument is not that AI fails or spins out of control. It’s that AI works, extremely well, and that success is what creates the problem. How?

—> Output keeps growing and corporate profits hold up. But employment, wages, and consumer spending struggle to keep pace. Growth shows up in the statistics without showing up in people’s lives.

They call this “ghost GDP”: an economy that looks healthy on paper but is hollowing out underneath.

Their fictional 2028 memo captures it vividly: markets soaring, productivity booming, record corporate earnings, while real wage growth collapses and white-collar workers get pushed into lower-paying roles. The statistics look okay but the economy underneath doesn’t [1]. And critically, Citrini’s piece wasn’t meant as a prediction: their own preface said the intent was to explore a scenario that had been “relatively underexplored.” The market, apparently, hadn’t explored it either.

The market’s first reality check

Here is where things got uncomfortable, and fast.

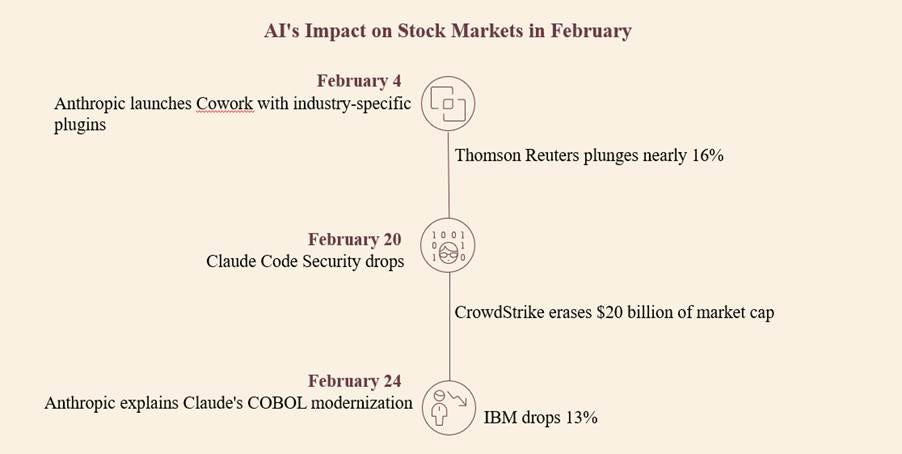

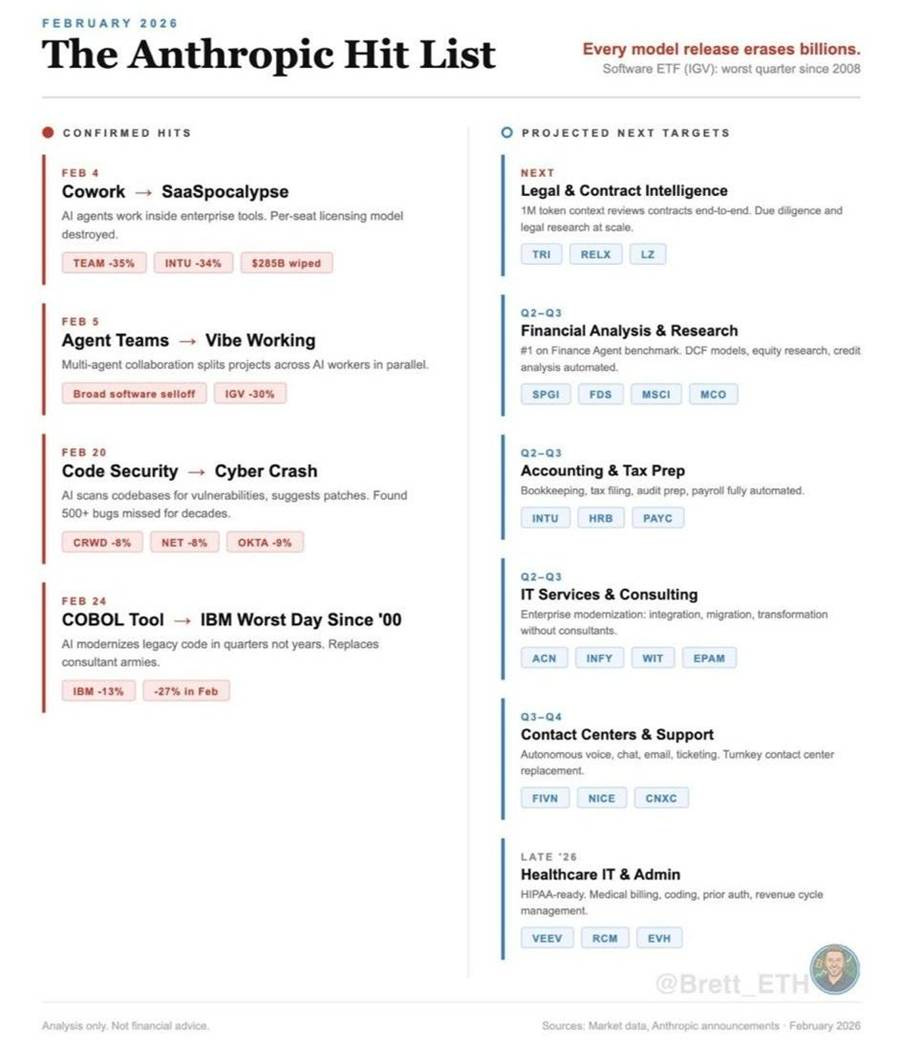

The same week Citrini published, markets were handed a live demonstration of exactly what that future might feel like. Analysts started calling it the “Anthropic Effect”: every time Anthropic releases a significant capability update, the sectors that AI could plausibly disrupt get hammered, often regardless of whether the threat is immediate or even fully real.

The confirmed hits in February 2026 alone:

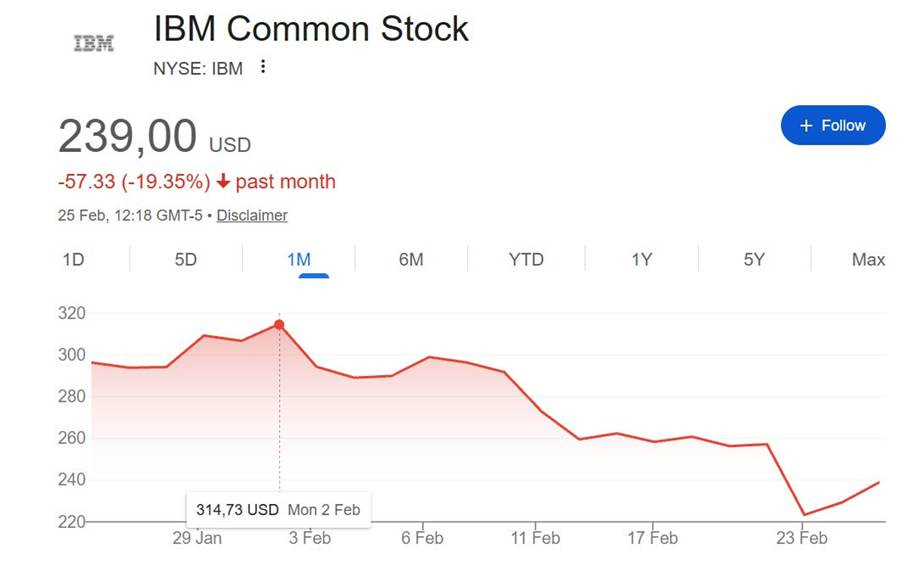

That last one deserves a moment. IBM’s core business is built around a language almost nobody else can read: COBOL runs 95% of ATM transactions in America. Hundreds of billions of lines of it power banking, airlines, and government systems. The engineers who built it retired decades ago, and the knowledge walked out the door with them. Entire consulting empires existed because the code was too old, too tangled, and too critical to touch. Companies paid IBM billions because the alternative was catastrophic system failure. Then Anthropic published a blog post saying Claude could map dependencies, document workflows, and translate the whole thing into modern languages in quarters, not years.

That same week, Goldman Sachs’ basket of companies most exposed to AI disruption fell to its lowest level since November 2016. And if you’re wondering how far the “hit list” might extend, analysts are already pointing to legal and contract intelligence, financial analysis, accounting and tax prep, consulting, and healthcare administration as projected next targets.

What history tells us instead

The selloff was significant enough that Citadel Securities, one of the most influential market makers on Wall Street, published a direct response to calm the markets. Their message: just because AI can improve itself doesn’t mean it will be deployed at the same speed. Technological diffusion always follows an S-curve: slow at first, then accelerating, then plateauing as organizations struggle to integrate and regulations catch up. Job postings for software engineers are actually rising. New business formation is expanding. And their Keynes reference lands like a cold splash of water: in 1930, he predicted that rising productivity would mean we’d only work 15 hours a week by now. He was right about productivity. He was completely wrong about what humans would do with it: we simply wanted more [2].

Here’s why that argument holds up. The instinctive fear goes something like this: AI replaces jobs, people have less money to spend, businesses cut more costs through more AI, repeat until collapse. But this story assumes something that history keeps proving wrong: that there’s only so much economic activity to go around, and technology just reshuffles who gets it.

Think about what happened when the internet arrived. Travel agents disappeared, and so did the cost of booking a flight, but an entire industry of online travel, reviews, and experiences exploded in its place. When ATMs arrived, people predicted the end of bank tellers. Instead, banks opened more branches because ATMs made branches cheaper to run, and teller roles shifted toward sales and advice.

The pattern repeats every time a fundamental cost collapses: demand doesn’t shrink, it transforms. New things become possible that simply weren’t before.

That’s the other way to read what’s happening now. When the cost of legal advice falls, more people can afford a lawyer. When accounting software stops costing a fortune, more small businesses get started. When customer support becomes cheaper to run, companies can serve customers they previously couldn’t afford to reach. The Kobeissi Letter calls this “Abundance GDP”: growth that quietly lowers the cost of everyday life for everyone, not just those at the top [3].

Early technology, slow change

Here’s something worth sitting with before you spiral: look around at your own workplace.

How many of your colleagues are genuinely, deeply using AI every day? Not experimenting with it once, not having ChatGPT write an email, but actually restructuring how they work around it? If you’re being honest, it’s probably a small minority. Maybe even just you.

That’s not an accident. Transformative technologies take a surprisingly long time to actually transform things. The steam engine existed for decades before it reshaped the economy. Factories kept running on steam long after electrical power was available, simply because rewiring a factory is expensive and disruptive. The internet was “the future” for years before most businesses actually changed how they operated because of it.

AI is real. The disruption is real. But the gap between a technology existing and a technology reshaping society is always longer and messier than the headlines suggest. The data backs this up. Right now, fewer than 1 in 10 US companies are using AI in their actual production processes. Nearly half of companies that launched AI projects last year abandoned them [4]. The productivity gains from transformative technologies are neither automatic nor evenly distributed. They arrive after the chaos, once organizations have restructured, workers have retrained, and the infrastructure has caught up. We are nowhere near that point yet.

Who controls intelligence now matters

There is one more layer that most coverage misses.

The same week IBM was collapsing and COBOL was being democratized, Anthropic also published evidence that three Chinese AI labs had run 24,000 fake accounts and 16 million exchanges specifically to steal Claude’s capabilities. DeepSeek used what it extracted to build censorship tools [5]. And the Pentagon summoned Anthropic for a briefing.

So, are we still talking about a productivity-only story? Anthropic’s growing relevance sits at the center of a strategic triangle: the US, China, and the state itself (military and government). Questions about who controls AI models, the data that trains them, and the infrastructure that runs them are driving policy in Washington and Beijing alike. The military interest is also about strategic advantage in a world where cognitive capability is becoming one of the most essential resources.

Where does this leave us?

Here is my honest answer: nobody knows exactly how this plays out. But we can say something about where we are: we are mid-transition. And transitions are always messy, unequal, and uncomfortable, especially for the people caught in the middle of them.

The technology is moving fast while people are moving more slowly. That gap, between what AI can now do and how quickly the system can adapt, is where most of the pain lives right now. If your work or business relies on routine knowledge staying scarce, the pressure is real and preparation matters more than panic; if you’re building or investing, falling cognitive costs also lower barriers and create new room to move.

So, this isn’t the end of work, but the end of a world where knowledge stayed expensive by default. What replaces it is still being written, which is precisely why this moment feels both unsettling and full of opportunity.

[1] Citrini Research, “The 2028 Global Intelligence Crisis” via Substack

[2] Citadel Securities, “The 2026 Global Intelligence Crisis”

[3] The Kobeissi Letter, “It’s Too Obvious. What If AI Doesn’t Actually End The World?” via X

[4] Santander Macro Research, “The macroeconomic effects of artificial intelligence”

[5] Anthropic via X
